Déjà Vu: What AI Adoption Can Learn from a Decade of Test Automation

In her session, Kayla Gillman, Senior Manager – Canada and Global Head of Pre-Sales at TTC Global, explored the parallels between today’s AI adoption and the earlier rise of test automation, highlighting the recurring challenges teams face when integrating emerging technologies into their practices.

History Is Repeating Itself

While the rise of Artificial Intelligence presents new challenges for QE and testing professionals, it is not the first wave of transformation our industry has navigated. There is much to learn from previous innovations and the patterns that shaped them.

At a recent Canadian Quality and Testing Association event, Kayla led a session on this very topic. She drew out the parallels between today’s AI adoption and the earlier rise of test automation, and showed how, as with any emerging technology, teams run into recurring challenges when integrating AI into their practices.

Kayla opened her talk by asking the audience to fill in the blanks in three simple statements:

- Companies today are all about gaining efficiency through _________.

- We’re investing heavily in ________________ to cut costs and increase quality.

- Most organizational ________________ initiatives are failing to deliver value.

Are these statements about Test Automation or Artificial Intelligence?

The room quickly realized the punchline: these statements about AI in 2025 could apply equally to Test Automation in 2015. Even with a decade between these two major shifts in the QE industry, the language of innovation hasn’t changed. But just as automation and AI share the same promises of speed, efficiency, and accuracy, they also share the same risk: failure without foundations.

What We Learned From Test Automation

Automation transformed how quality teams worked, but not always in the ways they expected. When results fell short, many people concluded that automation was not worth the hassle.

Data from Capgemini’s World Quality Report (2020/21) and ASUG’s automation survey reinforced what many testers already thought:

- 95% of enterprises reported only low-to-medium automation maturity.

- 47% struggled to align tools.

- 37% said their Agile teams lacked professional test expertise.

- 73% of automation projects failed to deliver promised ROI.

So why did automation so often stall? Kayla identified four recurring causes:

- Low organizational readiness – trying to automate chaos.

- Tool and strategy misalignment – buying before defining purpose.

- Lack of skills and enablement – automation left to specialists, not shared practice.

- Cultural and leadership gaps – focusing on coverage, not quality.

The takeaway: "Automation didn’t fail because of the tools; it failed because we automated before we were ready."

Test Automation initiatives saw the most success when the proper foundations were put in place.

Déjà Vu With AI

Fast-forward to 2025 and the same pattern is emerging. MIT and Fortune report that 95% of AI pilots are failing to deliver measurable business value. Projects also get stuck in pilot purgatory, trapped between overhype and under-preparedness.

Common symptoms include:

- Generic models used for specialized problems.

- Unclear goals and governance.

- Integration friction.

- Shortages of skilled talent.

- A focus on technology over people.

Sound familiar? Many organizations have experienced this all before during the rise of test automation.

Tools Don’t Transform – Practices Do

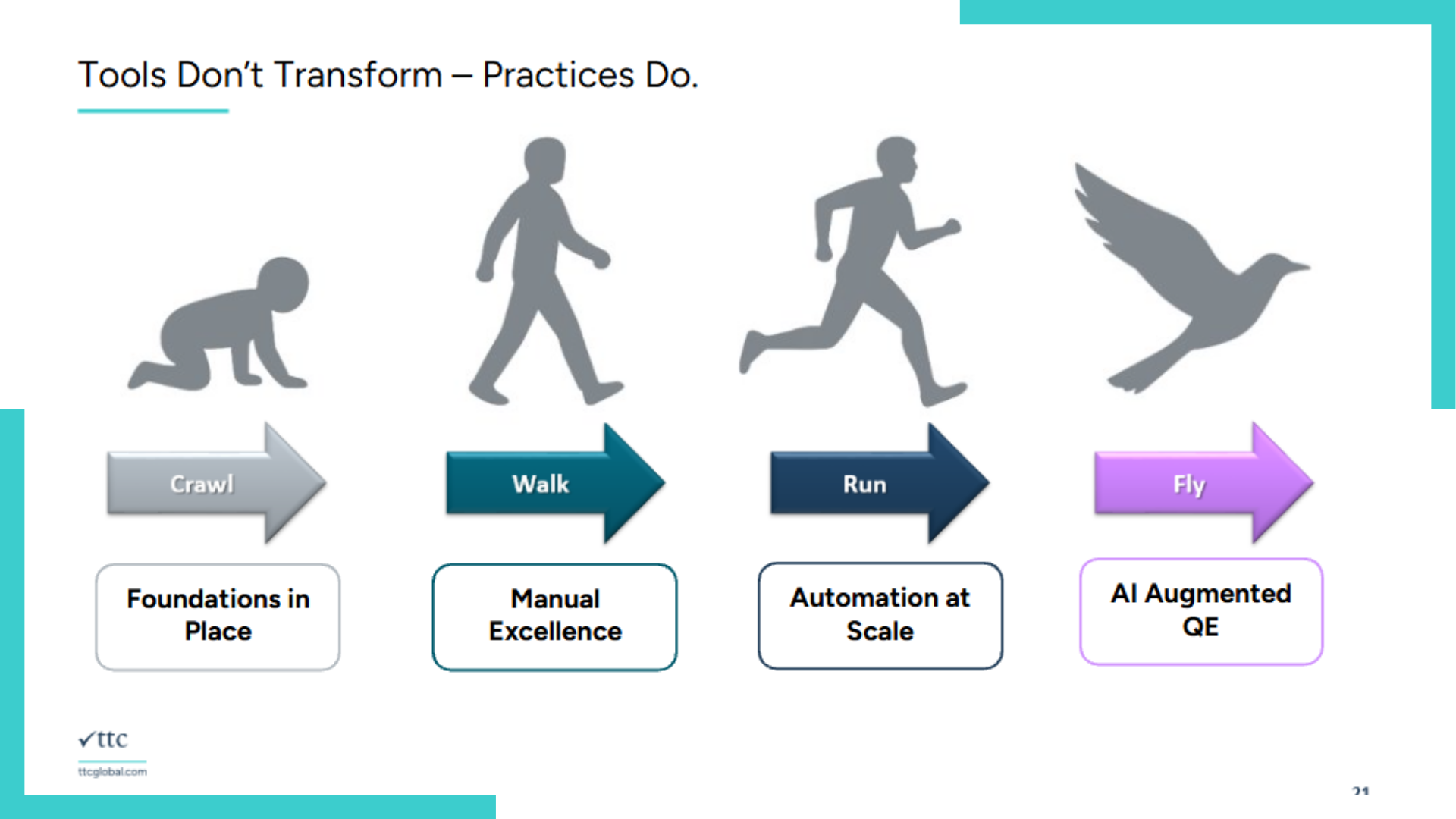

One of the most quoted moments of Kayla’s talk came when she displayed a simple visual:

Each stage represented maturity, not technology.

- Crawl: Foundations in place

- Walk: Manual excellence

- Run: Automation at scale

- Fly: AI-augmented Quality Engineering

Nobody can fast-forward straight to flying; it's too much, too fast and will result in many unexpected failures. The same is true for adopting new tools. In most cases, automation doesn’t fail because of the tools themselves. It fails because we automate before we are ready. And now, a decade later, we’re doing the exact same thing with AI.

Though many of us want to soar with AI, it's critical that we first learn to run with automation, and learning to run with automation requires building a foundation of strong quality practices to launch from. As the building blocks for success are put into place, the impact these new tools can have on our QE projects multiplies.

As Kayla noted during the talk, “We’re past the peak of inflated expectations. We’re in the trough of disillusionment. But if we do this right, we’ll be heading toward enlightenment.”

What Teams Got Right … and What AI Can Learn

Our last transformation, test automation, offers several lessons that can improve how we use AI today. This short table distills a decade of experience into what we got right and what we got wrong.

| What We Got Right | What We Got Wrong |
|---|---|
| We automated repetitive tasks | We tried to automate everything |
| We encouraged innovation | We chased the "one tool to rule them all" |
| We proved ROI through measurable speed and consistency | We ignored process alignment and skills |

In many cases, we tried to over-automate and use test automation tools to replace human judgment and critical thinking. That was often where challenges and roadblocks occurred. With AI, this has become an even bigger temptation.

But the truth is, AI should free testers to think, not think for them.

Success will come from the teams who pair human judgment with machine capability.

Learning from Our Mistakes

Key themes found in automation mistakes can be used as stepping stones towards a more effective and impactful AI transformation.

Lesson 1: Don't Automate Everything (Value Over Volume)

In the past, automation success was measured by percentage coverage and script count. Teams tried to automate every test case instead of automating the right test cases. The same mindset is emerging with AI adoption.

There is an expectation that autonomous agents will generate all tests, execute them, and report defects flawlessly. QA leaders must shift the focus from “more automation” or “more AI” to “better outcomes.” More specifically, Kayla suggested:

- Start with risk-based priorities.

- Define high-value scope first.

- Use AI to remove low-value work such as data setup and documentation, not strategy or oversight.
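One way to make "risk-based priorities" concrete is a simple likelihood-times-impact score. The sketch below is illustrative only and does not come from Kayla's talk: the `Candidate` class, the 1-to-5 scales, the example test names, and the `min_score` threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A test case being considered for automation or AI assistance."""
    name: str
    failure_likelihood: int  # assumed scale: 1 (rare) to 5 (frequent)
    business_impact: int     # assumed scale: 1 (cosmetic) to 5 (critical)

    @property
    def risk_score(self) -> int:
        # Classic risk heuristic: likelihood multiplied by impact.
        return self.failure_likelihood * self.business_impact


def prioritize(candidates, min_score=12):
    """Keep candidates at or above min_score, highest risk first."""
    in_scope = [c for c in candidates if c.risk_score >= min_score]
    return sorted(in_scope, key=lambda c: c.risk_score, reverse=True)


# Hypothetical backlog; names and ratings are made up for illustration.
candidates = [
    Candidate("checkout payment flow", 4, 5),
    Candidate("profile avatar upload", 2, 1),
    Candidate("login and session handling", 3, 5),
    Candidate("marketing banner rotation", 3, 2),
]

for c in prioritize(candidates):
    print(f"{c.risk_score:>2}  {c.name}")
```

With these made-up ratings, only the payment and login flows clear the threshold, so they define the high-value scope. The ratings themselves still come from people who know the product, which keeps strategy and oversight in human hands.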

Lesson 2: Don't Chase the Silver Bullet Tool

Automation programs often chased the next big framework. Tools were bought on hype, replaced every 18 months, and left behind to collect dust on the metaphorical shelf. Sound familiar?

Today, AI platforms are being purchased with promises of “fully autonomous testing” and “instant” transformation. When teams fail to see these promises come to fruition, they become jaded with the tool and begin the hunt for something new.

The truth is, there is no magic tool. The fix is testing discipline. Kayla suggested:

- Anchor decisions to outcomes, not vendors.

- Build clear business cases.

- Run small proofs of value before committing to platform-wide adoption.

Lesson 3: Don't Ignore Process and Skills (i.e., don't get a Ferrari no one can drive)

Automation failed when it was treated as an add-on instead of a redesign of how testing works. There was no enablement, no ownership, and no process alignment.

Many organizations are at risk of making the same mistake with AI. Tools are being introduced without governance, data readiness, or AI literacy. When teams lack trust and clarity, the adoption process becomes that much more difficult.

QA leaders must invest in readiness before scale. Some ways to do this include:

- Build AI literacy programs.

- Define governance and guardrails around ethics, data quality, and model evaluation.

- Assign ownership and manage the change deliberately.

Key Takeaways

It's understandable to be skeptical about the hype-filled claims surrounding AI. However, being overly cautious could prevent you from realizing the tangible benefits artificial intelligence can bring to software testers and QE professionals. With the adoption of AI, Kayla noted, we have the chance to learn from history before we repeat it.

But in order to reap those benefits effectively, we must learn from the mistakes made during the automation era.

Prioritize value over volume, outcomes over hype, and readiness over speed.

Kayla’s closing challenge resonated strongly with the audience:

“Let’s avoid the mistakes we made before. Don’t measure success by how many AI-generated tests you create. Build governance early — around data, ethics, and oversight. Invest in AI literacy for testers. And keep human judgment central to the process.”

Remember: digital transformation doesn’t fail because of technology. It fails because of bad practice and poor organizational buy-in.

For a deeper dive into this session, access Kayla's full presentation on AI Adoption here.